Research

Vision

My research focuses on computational analog systems that embed intelligence in the physical environment to increase the spectral efficiency of communications and the resolution of sensing. Today, the physical environment is the fundamental barrier for wireless systems. Radars cannot see through objects or around corners, and communication links degrade the moment direct signal paths are blocked by walls or obstacles. For decades, researchers have improved endpoints such as phones and radars with more antennas, more power, and more complex digital processing. However, endpoints can shape only their own transmissions, not the propagation environment.

Instead of treating the environment as an adversary, my work transforms it into an active, intelligent part of the system. Strategically deployed on buildings and roadsides, my embedded hardware maintains connectivity through walls, reflects signals around corners, and detects hidden objects by sensing scattered reflections. My devices move digital processing workloads into the analog domain, performing computation directly on physical signals and manipulating them almost instantaneously with minimal power.

Human-Eye-Inspired Metamaterial Sensor to See Blind Spots

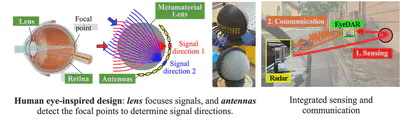

mmWave radars work by transmitting mmWave signals and capturing the signals reflected from targets. However, most signals reflect away from the radar, like light bouncing off a mirror, creating many blind spots. To “see” these blind spots, I developed and built EyeDAR (HotMobile ’26), a computational metamaterial lens that mounts on roadsides to capture lost radar reflections and expand the area that a radar can see. I made two key innovations:

(1) A human-eye-inspired design: Just as the human eye uses a lens to focus light and a retina to detect focal points, EyeDAR uses a Luneburg lens, a spherical metamaterial lens that focuses incoming signals from any direction onto a focal point on the opposite surface. Antennas surrounding the lens detect these focal points to determine the direction each reflection came from, thereby locating the reflecting objects. By embedding computation directly into its physical structure, EyeDAR determines signal directions 200× faster than digital processing.

(2) Integrated sensing and communication: EyeDAR is a sensor that also talks to the radar. It first senses, capturing radar reflections from blind spots, and then communicates, reporting what it sees back to the radar by modulating incoming radar signals. Like sending Morse code, EyeDAR switches between absorbing incoming signals and reflecting them back to the radar, conveying object locations without generating new transmissions. Demo videos are available at https://www.youtube.com/watch?v=m3Kude-pxTI. This work was covered by Rice News.
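As a rough illustration of the two ideas above, the following Python sketch shows (a) how a direction of arrival could be read off from the antenna nearest the lens's focal point, and (b) how that direction could be reported by switching between absorbing and reflecting. It is a simplified model under stated assumptions, not EyeDAR's actual implementation: the function names, the choice of the single strongest antenna, the 8-bit angle quantization, and the mapping of bit 1 to "reflect" are all hypothetical.

```python
def estimate_arrival_angle(port_powers, port_angles_deg):
    """Estimate direction of arrival from the strongest focal-point antenna.

    A Luneburg lens focuses an incoming plane wave onto the point
    diametrically opposite its arrival direction, so the source lies
    180 degrees away from the antenna that receives the most power.
    (Assumption: one dominant reflection and one antenna per focal point.)
    """
    strongest = max(range(len(port_powers)), key=lambda i: port_powers[i])
    return (port_angles_deg[strongest] + 180.0) % 360.0


def angle_to_bits(angle_deg, n_bits=8):
    """Quantize an angle in [0, 360) to n_bits, most significant bit first.

    The 8-bit default is a hypothetical choice for illustration.
    """
    levels = 1 << n_bits
    code = int(round(angle_deg / 360.0 * levels)) % levels
    return [(code >> k) & 1 for k in reversed(range(n_bits))]


def ook_encode(bits):
    """Map bits to surface states (on-off keying).

    Assumed mapping: 1 -> reflect the radar signal, 0 -> absorb it.
    """
    return ["reflect" if b else "absorb" for b in bits]


def ook_decode(received_powers, threshold):
    """Radar-side decoding: strong echo -> 1, weak echo -> 0."""
    return [1 if p > threshold else 0 for p in received_powers]


# Eight hypothetical antennas spaced 45 degrees apart around the lens.
port_angles = [0, 45, 90, 135, 180, 225, 270, 315]
port_powers = [0.1, 0.2, 0.9, 0.3, 0.1, 0.1, 0.1, 0.1]

angle = estimate_arrival_angle(port_powers, port_angles)
bits = angle_to_bits(angle)
states = ook_encode(bits)
```

In this toy model no new signal is generated by the sensor: the radar recovers the bits only from whether its own transmission comes back strong or weak in each symbol interval.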

Metamaterial Systems for Reliable mmWave Communications

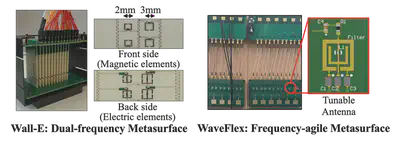

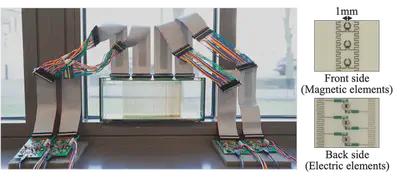

High-frequency millimeter-wave (mmWave) signals (24-71 GHz) provide multi-Gbit/s data rates thanks to their massive bandwidth. However, they cannot penetrate walls and are easily blocked by obstacles, creating dead zones in both indoor and outdoor environments. I developed programmable surfaces that mount on walls and vehicles to control how mmWave signals propagate. Think of them as smart walls that can make themselves transparent like glass or reflective like a mirror to radio waves on demand. Each surface consists of over 4,000 tiny programmable metamaterial elements, artificially engineered structures that manipulate radio waves. Acting like a microscopic array of adjustable mirrors, these elements instantaneously control how signals bend. By electronically configuring them, a single surface can create new paths through itself, reflect signals around obstacles, and shape beams in complex patterns.
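One common way to reason about how an array of elements bends a wave is the generalized Snell's law for a surface in free space, $\sin\theta_{out} - \sin\theta_{in} = \frac{\lambda}{2\pi}\frac{d\Phi}{dx}$: a linear phase gradient across the elements redirects the beam. The sketch below computes such per-element phase shifts. It is a textbook model rather than the configuration procedure of these surfaces; the function name, the 28 GHz carrier, the half-wavelength spacing, and the assumption of continuously tunable phases are hypothetical, and the sign of the gradient depends on the phase convention used.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def steering_phases(n_elements, spacing_m, freq_hz, theta_in_deg, theta_out_deg):
    """Per-element phase shifts (radians, wrapped to [0, 2*pi)) that steer a
    plane wave arriving at theta_in toward theta_out.

    Angles are measured from the surface normal. Assumes a uniform linear
    row of elements with ideal, continuously tunable phase.
    """
    wavelength = SPEED_OF_LIGHT / freq_hz
    wavenumber = 2.0 * math.pi / wavelength
    # Phase step between neighboring elements from the generalized Snell's law.
    step = wavenumber * spacing_m * (
        math.sin(math.radians(theta_out_deg)) - math.sin(math.radians(theta_in_deg))
    )
    return [(n * step) % (2.0 * math.pi) for n in range(n_elements)]


# Hypothetical example: 28 GHz carrier, half-wavelength element spacing,
# normal incidence steered to 30 degrees.
freq = 28e9
spacing = (SPEED_OF_LIGHT / freq) / 2.0
phases = steering_phases(4, spacing, freq, theta_in_deg=0.0, theta_out_deg=30.0)
```

With half-wavelength spacing and a 30° steering angle, neighboring elements differ by a quarter cycle of phase, so the pattern repeats every four elements. Changing the target angle only changes the programmed phase step, which is why the same surface can be reconfigured electronically.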

mmWall (NSDI ’23, HotMobile ’21) is the first steerable metamaterial surface that can refract mmWave signals through itself or reflect them around obstacles. mmWall dynamically switches between two modes: (1) glass mode, which steers outdoor signals through the surface to reach indoor users, and (2) mirror mode, which reflects signals around obstacles to reach blind spots. The core innovation is a novel, see-through 3D structure: mmWall has horizontally stacked ribs that manipulate signals as they propagate through the structure itself. Unlike repeaters that receive, decode, and retransmit entire packets, mmWall simply redirects passing waves, bypassing the complexity and latency of digital processing. I co-designed a link-layer protocol that leaves existing cellular and Wi-Fi systems unchanged. I designed and custom-built the tablet-sized (10×20 cm) prototype over three years. It steers over a 320° range with 86% maximum efficiency while consuming hundreds of microwatts of power. It achieves 29-30 dB maximum gain, ensuring >20 dB signal strength across an entire 10×8 m room and eliminating dead zones caused by wall blockage. This work was highlighted in media outlets including Princeton News and TechXplore. Demo videos are available at https://youtu.be/vEQYQPOq1qw.